Introduction

Traditional databases are great at exact matches. They can tell you which rows have a specific user ID, status, or keyword. But they struggle the moment you ask a fuzzy question like "Find me policies similar to this customer complaint" or "Show product reviews that talk about slow shipping."

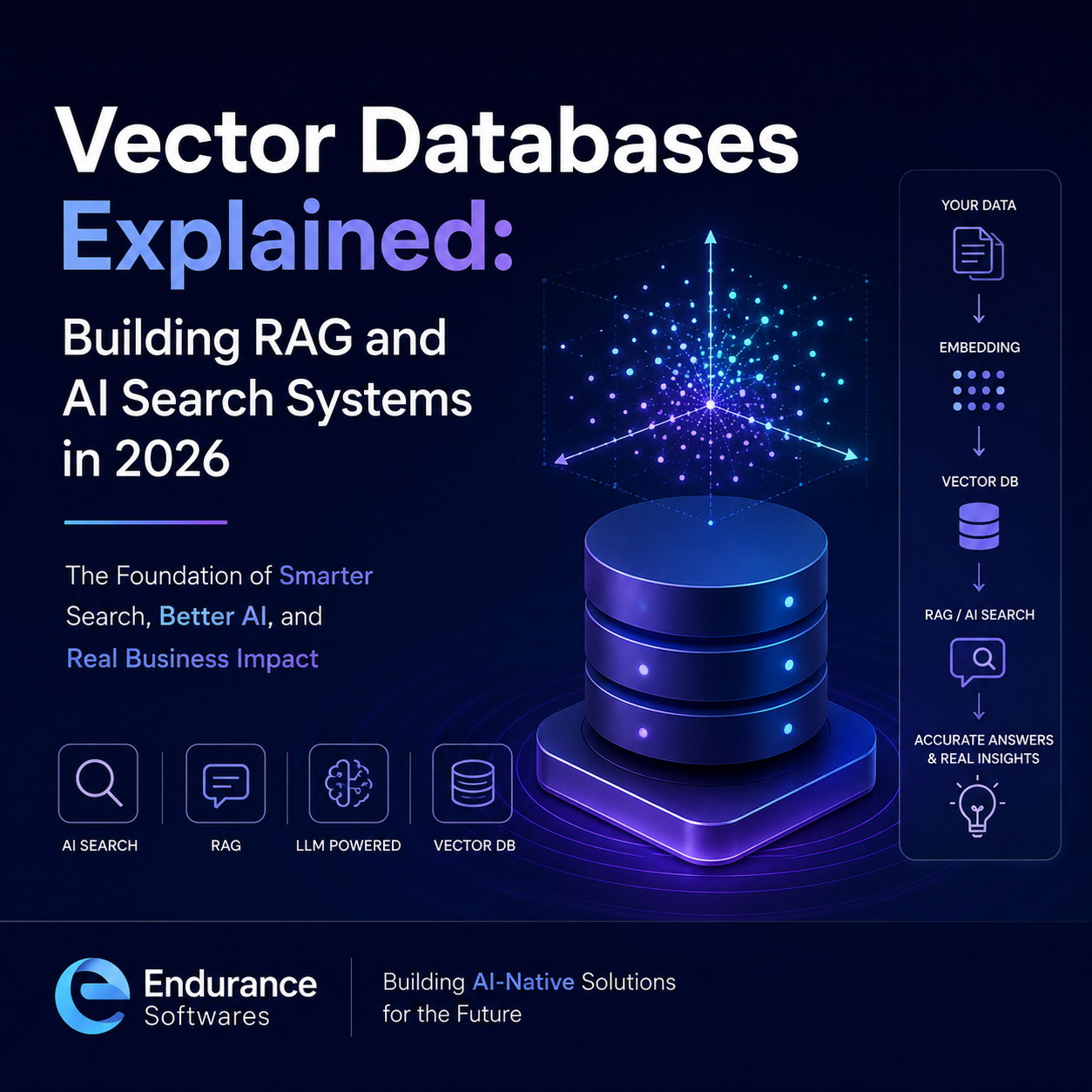

That is where vector databases come in. They store information as high-dimensional vectors, also called embeddings, that represent the meaning of text, images, code, audio, or any other content. With these embeddings, AI systems can search by similarity, not just by keyword.

In 2026, vector databases sit at the center of almost every serious AI product, from chatbots and copilots to semantic search, document Q&A, recommendation engines, and intelligent SaaS dashboards.

Simple explanation: A vector database stores numerical representations of meaning, not just raw text. It lets AI systems search and reason over your data using similarity instead of exact matches.

What Is a Vector Database?

A vector database is a specialized data store designed to index and search vectors. A vector is just a list of numbers that captures the meaning of a piece of content. Two pieces of text with similar meaning produce vectors that are close to each other in vector space.
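Cosine similarity is one common way to measure "close to each other": it compares the direction of two vectors and returns 1.0 when they point the same way. The three-dimensional vectors below are made-up values for illustration. Real embeddings have hundreds or thousands of dimensions.

```python
import math

def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors: 1.0 = same direction, ~0.0 = unrelated."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("a zero vector has no direction")
    return dot / (norm_a * norm_b)

# Toy 3-dimensional "embeddings" (hypothetical values for illustration only).
late_delivery = [0.9, 0.1, 0.0]
slow_shipping = [0.8, 0.2, 0.1]
refund_policy = [0.1, 0.2, 0.9]

print(round(cosine_similarity(late_delivery, slow_shipping), 2))  # ≈ 0.98
print(round(cosine_similarity(late_delivery, refund_policy), 2))  # ≈ 0.13
```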

SQL searches for exact values. Vector DBs search for meaning.

A SQL database answers "Which orders have status = pending?" A vector database answers "Which support tickets are similar to this new complaint?"

What a Vector Database Does

- Stores embeddings generated by an AI model.

- Indexes them with algorithms like HNSW or IVF for fast lookup.

- Performs approximate nearest neighbor search at scale.

- Returns the top-K most similar items, typically in milliseconds.

- Filters results by metadata and combines vector search with keyword search (hybrid search).
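The core contract of the operations listed above (store vectors, return the top-K nearest) can be sketched with an exact, brute-force search in plain Python. This is only an illustration: `TinyVectorStore` is a hypothetical class, and real vector databases replace the linear scan with ANN indexes such as HNSW or IVF so they do not compare the query against every stored vector.

```python
from __future__ import annotations

import heapq
import math

def cosine(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))

class TinyVectorStore:
    """Hypothetical in-memory store with exact (brute-force) top-K search."""

    def __init__(self, dim: int):
        self.dim = dim
        self.ids: list[str] = []
        self.vectors: list[list[float]] = []

    def add(self, item_id: str, vector: list[float]) -> None:
        # Every vector in one index must have the same dimensionality.
        if len(vector) != self.dim:
            raise ValueError(f"expected {self.dim} dimensions, got {len(vector)}")
        self.ids.append(item_id)
        self.vectors.append(vector)

    def search(self, query: list[float], top_k: int = 3) -> list[tuple[str, float]]:
        # Compares the query with EVERY stored vector (O(n) per query). An ANN
        # index like HNSW or IVF avoids this full scan at the cost of exactness.
        scored = ((i, cosine(query, v)) for i, v in zip(self.ids, self.vectors))
        return heapq.nlargest(top_k, scored, key=lambda pair: pair[1])

store = TinyVectorStore(dim=3)
store.add("ticket-1", [0.9, 0.1, 0.0])
store.add("ticket-2", [0.1, 0.2, 0.9])
store.add("ticket-3", [0.8, 0.2, 0.1])
# ticket-1 and ticket-3 come back; ticket-2 points in a different direction.
print(store.search([0.85, 0.15, 0.05], top_k=2))
```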

Embeddings 101

Embeddings are the bridge between your data and a vector database. An embedding model converts a piece of content into a fixed-length numerical vector that captures its semantic meaning.

Input Content

Text, image, audio, code, or any structured information.

Embedding Model

Models like OpenAI's text-embedding-3, Cohere Embed, or open-source MiniLM and E5.

Vector Output

A fixed-length array of numbers, for example 768, 1024, or 1536 dimensions.

Store in Vector DB

Save the vector along with metadata such as source, type, and tags.

Query with Embedding

Embed the user query and search for the nearest neighbors.

Use Results

Pass top results to an LLM, rank them, or render them as search results.

Choosing the Right Embedding Model

- Use larger models for high-quality semantic search and RAG.

- Use smaller open-source models when cost and latency matter most.

- Stay consistent. Always embed queries and documents with the same model.

- Re-embed your data whenever you upgrade the embedding model, because vectors from different models are not comparable.
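Recording the model name next to every stored vector makes the last two rules enforceable. This sketch assumes a simple `StoredChunk` record; the model names `embed-v1` and `embed-v2` are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class StoredChunk:
    chunk_id: str
    vector: list[float]
    model: str  # embedding model that produced `vector` (names below are hypothetical)

def check_query_model(chunks: list[StoredChunk], query_model: str) -> None:
    """Fail loudly instead of comparing vectors from different models,
    whose embedding spaces are not compatible."""
    other_models = {c.model for c in chunks} - {query_model}
    if other_models:
        raise ValueError(f"index contains vectors from {sorted(other_models)}; query uses {query_model!r}")

def chunks_to_reembed(chunks: list[StoredChunk], current_model: str) -> list[str]:
    """After an upgrade, list every chunk still embedded with an older model."""
    return [c.chunk_id for c in chunks if c.model != current_model]

chunks = [
    StoredChunk("doc-1#0", [0.12, 0.98], model="embed-v1"),
    StoredChunk("doc-1#1", [0.40, 0.91], model="embed-v2"),
]
print(chunks_to_reembed(chunks, current_model="embed-v2"))  # ['doc-1#0']
```

Until `chunks_to_reembed` returns an empty list, `check_query_model` will reject queries, which is the safe failure mode during a migration.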

How Retrieval-Augmented Generation (RAG) Works

RAG is the most common pattern that uses vector databases in production. It helps large language models answer questions grounded in your private data instead of guessing or hallucinating.

LLMs do not know your business. RAG fixes that.

Instead of fine-tuning a model on every change in your docs, RAG retrieves the most relevant context at query time and lets the model answer with that context.

Ingest Data

Pull documents, tickets, code, or PDFs from your sources.

Chunk Content

Split into smaller, meaningful chunks (sentences, sections, paragraphs).

Embed Chunks

Convert each chunk into a vector and store with metadata.

Embed Query

When a user asks something, embed their query with the same model.

Retrieve Top-K

Pull the K most similar chunks from the vector database.

Generate Answer

Pass the query and the retrieved chunks to an LLM and return a grounded answer.
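The six steps can be strung together into a toy end-to-end pipeline. Everything here is a stand-in. `toy_embed` is a hashed bag-of-words used in place of a real embedding model. An in-memory list plays the vector database, and single sentences serve as chunks. `echo_llm` is a hypothetical placeholder that returns the prompt instead of calling a real LLM, so you can inspect what the model would see.

```python
import hashlib
import math
import re
from typing import Callable

def toy_embed(text: str, dim: int = 384) -> list[float]:
    """Hypothetical stand-in embedding: hashed bag of words, L2-normalized. Not semantic."""
    vec = [0.0] * dim
    for word in re.findall(r"[a-z0-9]+", text.lower()):
        vec[int(hashlib.sha256(word.encode()).hexdigest(), 16) % dim] += 1.0
    norm = math.sqrt(sum(v * v for v in vec)) or 1.0
    return [v / norm for v in vec]

def split_sentences(text: str) -> list[str]:
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]

def rag_answer(question: str, documents: list[str], llm: Callable[[str], str], top_k: int = 2) -> str:
    # Ingest + chunk: here each sentence becomes one chunk.
    chunks = [c for doc in documents for c in split_sentences(doc)]
    # Embed chunks (a real system stores these in a vector DB ahead of time).
    index = [(chunk, toy_embed(chunk)) for chunk in chunks]
    # Embed the query with the same model.
    q = toy_embed(question)
    # Retrieve top-K: vectors are normalized, so the dot product equals cosine similarity.
    ranked = sorted(index, key=lambda pair: sum(a * b for a, b in zip(q, pair[1])), reverse=True)
    context = "\n".join(f"- {chunk}" for chunk, _ in ranked[:top_k])
    # Generate: hand the question plus retrieved context to the LLM.
    prompt = f"Answer using only this context:\n{context}\n\nQuestion: {question}"
    return llm(prompt)

def echo_llm(prompt: str) -> str:
    """Placeholder LLM: returns the prompt unchanged."""
    return prompt

docs = [
    "Refunds are issued within 14 days. Shipping takes 3 to 5 business days.",
    "Passwords can be reset from the login page.",
]
print(rag_answer("how many days until refunds are issued", docs, echo_llm))
```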

Reference Architecture for a Production RAG System

A real-world RAG system is more than "embed and search." You need ingestion pipelines, evaluation, caching, observability, and guardrails.

Ingestion Layer

Connect to sources like S3, Google Drive, Notion, databases, websites, or internal APIs. Normalize and clean content.

Chunking Strategy

Use semantic-aware chunking with overlap. Balance chunk size: smaller chunks retrieve more precisely, while larger chunks carry more context.
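A minimal sketch of overlapping chunks that respect sentence boundaries. It assumes that sentences end in `.`, `!`, or `?` followed by whitespace, which is a naive rule that will mis-split abbreviations. `chunk_sentences` and its default budget of 120 words are hypothetical. Tune the budget against your own retrieval evals.

```python
import re

def chunk_sentences(text: str, max_words: int = 120, overlap_sentences: int = 1) -> list[str]:
    """Pack whole sentences into chunks of roughly max_words words; each new chunk
    repeats the last `overlap_sentences` sentences of the previous one. A single
    long sentence (or the carried overlap) can push a chunk over the budget."""
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]
    chunks: list[str] = []
    current: list[str] = []
    for sentence in sentences:
        # Close the current chunk if this sentence would push it over the budget.
        if current and len(" ".join(current + [sentence]).split()) > max_words:
            chunks.append(" ".join(current))
            # Carry the tail forward as overlap (current[-0:] would copy everything).
            current = current[-overlap_sentences:] if overlap_sentences > 0 else []
        current.append(sentence)
    if current:
        chunks.append(" ".join(current))
    return chunks

print(chunk_sentences("A b c. D e f. G h i. J k l.", max_words=6))
# ['A b c. D e f.', 'D e f. G h i.', 'G h i. J k l.']
```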

Embedding Service

Batch embeddings asynchronously. Track model version per chunk for safe upgrades.
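A minimal asyncio sketch of batched, concurrency-limited embedding that stamps each record with the model version. `fake_embed_batch`, `MODEL_VERSION`, and the batch and concurrency sizes are hypothetical placeholders. In practice you would call your embedding provider's real batch endpoint instead.

```python
import asyncio

MODEL_VERSION = "embed-model-v2"  # hypothetical model identifier

async def fake_embed_batch(texts: list[str]) -> list[list[float]]:
    """Hypothetical stand-in for a provider's batch embedding call."""
    await asyncio.sleep(0.01)  # simulate network latency
    return [[float(len(t)), 0.0] for t in texts]  # dummy 2-d vectors

async def embed_all(chunks: list[dict], batch_size: int = 64, max_concurrency: int = 4) -> list[dict]:
    """Embed chunks in batches, with at most `max_concurrency` requests in flight."""
    semaphore = asyncio.Semaphore(max_concurrency)

    async def run(batch: list[dict]) -> list[dict]:
        async with semaphore:
            vectors = await fake_embed_batch([c["text"] for c in batch])
        # Stamp every record with the model version so upgrades can be tracked.
        return [{**c, "vector": v, "model_version": MODEL_VERSION} for c, v in zip(batch, vectors)]

    batches = [chunks[i:i + batch_size] for i in range(0, len(chunks), batch_size)]
    results = await asyncio.gather(*(run(b) for b in batches))  # preserves input order
    return [record for batch in results for record in batch]

records = asyncio.run(embed_all([{"id": f"c{i}", "text": "x" * i} for i in range(10)], batch_size=3))
print(len(records), records[3])
```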

Vector Store

Pick a vector DB based on scale, hosting, hybrid search, and metadata filtering needs.

Retriever and Reranker

Combine vector search with BM25 keyword search, then apply a reranker such as Cohere Rerank for higher precision.
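The text does not prescribe how to merge BM25 and vector results. One widely used option is reciprocal rank fusion (RRF), sketched here as an illustration. Each result list contributes 1 / (k + rank) per document, so items ranked well by both retrievers rise to the top. The constant k = 60 is a commonly used default, not a tuned value, and the document IDs are made up.

```python
from collections import defaultdict

def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Merge ranked lists of document IDs: score(d) = sum over lists of 1 / (k + rank)."""
    scores: dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    return sorted(scores, key=scores.get, reverse=True)

bm25_hits = ["doc-7", "doc-2", "doc-9"]    # keyword (BM25) ranking
vector_hits = ["doc-2", "doc-4", "doc-7"]  # vector-similarity ranking
print(reciprocal_rank_fusion([bm25_hits, vector_hits]))
# ['doc-2', 'doc-7', 'doc-4', 'doc-9']
```

RRF only needs ranks, not raw scores, which sidesteps the problem that BM25 scores and cosine similarities live on different scales. The fused list would then go to the reranker.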

LLM and Guardrails

Compose grounded prompts, enforce citations, validate outputs, and add safety filters.
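One way to compose a grounded prompt and enforce citations is to number the retrieved chunks and then check that the model's answer cites only numbers that exist. The prompt wording and function names are illustrative assumptions. A regex check like this catches missing or out-of-range citations, not whether the cited source actually supports the claim.

```python
import re

def build_grounded_prompt(question: str, chunks: list[str]) -> str:
    """Number each retrieved chunk so the model can cite it as [1], [2], ..."""
    sources = "\n".join(f"[{i}] {chunk}" for i, chunk in enumerate(chunks, start=1))
    return (
        "Answer the question using only the sources below. "
        "Cite every claim with its source number, e.g. [1]. "
        "If the sources do not contain the answer, say you don't know.\n\n"
        f"Sources:\n{sources}\n\nQuestion: {question}"
    )

def citations_are_valid(answer: str, num_sources: int) -> bool:
    """Output check: the answer must cite at least one source, and only sources that exist."""
    cited = {int(n) for n in re.findall(r"\[(\d+)\]", answer)}
    return bool(cited) and all(1 <= n <= num_sources for n in cited)

prompt = build_grounded_prompt(
    "How long do refunds take?",
    ["Refunds take 14 days.", "Shipping takes 5 days."],
)
print(citations_are_valid("Refunds take 14 days [1].", num_sources=2))  # True
print(citations_are_valid("Refunds take 14 days [3].", num_sources=2))  # False
```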

Need a Production-Grade RAG System?

Endurance Softwares helps SaaS companies and startups build RAG pipelines, AI search, and vector-database-powered products with strong evaluation, observability, and security.

Popular Vector Databases in 2026

There is no single "best" vector database. The right choice depends on scale, hosting model, hybrid search needs, and existing infrastructure.

Pinecone

Fully managed, serverless vector database with strong filtering. Great when you want simplicity and zero infra work.

Weaviate

Open-source with built-in hybrid search, modules, and rerankers. Good for teams that want flexibility.

Qdrant

High-performance open-source store with rich filtering, payloads, and sharding. Strong for self-hosting.

Milvus

Enterprise-grade open-source DB built for billions of vectors and multi-tenant workloads.

pgvector

Postgres extension that adds vector search to your existing relational database. Excellent for early-stage products.

Elastic / OpenSearch

Mature search engines with vector support. A solid pick when you already rely on them for BM25 and analytics.

How to Pick One

- Start with pgvector if your data is small and already in Postgres.

- Use Pinecone or Weaviate when you want managed scale and hybrid search.

- Pick Qdrant or Milvus when self-hosting and tight control matter.

- Combine with Elastic/OpenSearch if you need strong full-text and vector search together.

Real-World Use Cases

Vector databases unlock new product experiences that were impractical with traditional search alone.

AI Chatbots Over Your Docs

Internal help bots, customer-facing assistants, and onboarding copilots grounded in product documentation.

Semantic Search in SaaS

Search across tickets, contracts, projects, or notes using natural language instead of exact keywords.

Recommendations

Suggest similar products, articles, jobs, or candidates using vector similarity over rich content.

Code Search and AI IDEs

Search across large codebases by intent. Power AI coding copilots with context-aware retrieval.

Compliance and Legal

Find similar clauses, prior cases, or risky patterns across thousands of documents in seconds.

Customer Support AI

Suggest answers, summarize tickets, and surface similar resolved issues to support agents in real time.

Common Pitfalls to Avoid

Many RAG projects fail not because of the model, but because of weak retrieval. These are the issues we see most often when teams try to ship vector search to production.

Poor chunking strategy.

Splitting blindly by character count destroys context. Use semantic or structural chunking with overlap and meaningful boundaries.
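Structural chunking can be as simple as splitting a Markdown document at its headings so that no chunk straddles two sections, while keeping each section title with its chunk. This sketch assumes Markdown input, and `chunk_by_headings` is a hypothetical helper. Long sections would still need a size-based split with overlap afterwards.

```python
import re

def chunk_by_headings(markdown: str) -> list[dict]:
    """Split a Markdown document at its headings. Each chunk keeps its section title,
    which can be stored as metadata or prepended to the text before embedding."""
    chunks: list[dict] = []
    section, lines = "Untitled", []
    for line in markdown.splitlines():
        heading = re.match(r"#{1,6}\s+(.+)", line)
        if heading:
            # A new heading closes the previous section (if it had any text).
            body = "\n".join(lines).strip()
            if body:
                chunks.append({"section": section, "text": body})
            section, lines = heading.group(1).strip(), []
        else:
            lines.append(line)
    body = "\n".join(lines).strip()
    if body:
        chunks.append({"section": section, "text": body})
    return chunks

doc = "# Refunds\nRefunds take 14 days.\n\n## Shipping\nShipping takes 3 to 5 days.\n"
print(chunk_by_headings(doc))
```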

Ignoring metadata and filters.

Pure vector similarity is not enough. Use filters like tenant ID, language, doc type, and recency to keep results relevant and secure.
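A minimal filter-then-rank sketch. The metadata fields `tenant_id`, `language`, and `updated` are hypothetical, and the sample items are made up. All the databases listed earlier accept metadata filters as part of the query itself, so in practice you would pass these conditions to the database rather than filtering in application code.

```python
from __future__ import annotations

from datetime import date

def filtered_search(
    query_vec: list[float],
    items: list[tuple[str, list[float], dict]],
    tenant_id: str,
    top_k: int = 3,
    language: str | None = None,
    newer_than: date | None = None,
) -> list[tuple[str, float]]:
    """Apply metadata filters first, then rank only the surviving items by dot product."""
    def allowed(meta: dict) -> bool:
        if meta["tenant_id"] != tenant_id:  # hard isolation between customers
            return False
        if language is not None and meta.get("language") != language:
            return False
        if newer_than is not None and meta["updated"] < newer_than:
            return False
        return True

    scored = [
        (item_id, sum(q * v for q, v in zip(query_vec, vec)))
        for item_id, vec, meta in items
        if allowed(meta)
    ]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)[:top_k]

items = [
    ("a", [1.0, 0.0], {"tenant_id": "acme", "language": "en", "updated": date(2025, 6, 1)}),
    ("b", [0.9, 0.1], {"tenant_id": "globex", "language": "en", "updated": date(2025, 6, 1)}),
    ("c", [0.7, 0.3], {"tenant_id": "acme", "language": "de", "updated": date(2024, 1, 1)}),
]
print(filtered_search([1.0, 0.0], items, tenant_id="acme"))  # [('a', 1.0), ('c', 0.7)]
```

Item `b` is a close vector match but belongs to another tenant, so it never appears.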

No evaluation pipeline.

Without offline eval sets and online metrics, you cannot tell if changes improve or break retrieval. Treat retrieval like a tested system.
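Two standard retrieval metrics, recall@k and mean reciprocal rank (MRR), are enough to start an offline eval set. This sketch assumes a hand-labeled list of queries with known relevant document IDs. The `search` callable and the sample data are hypothetical.

```python
from typing import Callable

def recall_at_k(retrieved: list[str], relevant: set[str], k: int) -> float:
    """Fraction of the relevant documents that appear in the top-k results."""
    if not relevant:
        return 0.0
    return len(set(retrieved[:k]) & relevant) / len(relevant)

def reciprocal_rank(retrieved: list[str], relevant: set[str]) -> float:
    """1 / position of the first relevant result (0.0 if none was retrieved)."""
    for position, doc_id in enumerate(retrieved, start=1):
        if doc_id in relevant:
            return 1.0 / position
    return 0.0

def evaluate(eval_set: list[dict], search: Callable[[str], list[str]], k: int = 5) -> dict:
    """Average both metrics over a labeled eval set of {query, relevant_ids}."""
    recalls, rrs = [], []
    for example in eval_set:
        retrieved = search(example["query"])
        recalls.append(recall_at_k(retrieved, example["relevant_ids"], k))
        rrs.append(reciprocal_rank(retrieved, example["relevant_ids"]))
    return {f"recall@{k}": sum(recalls) / len(recalls), "mrr": sum(rrs) / len(rrs)}

# Hypothetical search results and labels, for illustration only.
fake_results = {"refund window": ["d3", "d1", "d8"], "reset password": ["d5", "d2"]}
eval_set = [
    {"query": "refund window", "relevant_ids": {"d1"}},
    {"query": "reset password", "relevant_ids": {"d9"}},
]
print(evaluate(eval_set, fake_results.get, k=3))  # {'recall@3': 0.5, 'mrr': 0.25}
```

Running this after every chunking, model, or index change turns "it feels better" into a number you can track.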

Skipping reranking.

Top-K vector results are often noisy. A reranker model can significantly improve precision before the results are passed to the LLM.
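A reranking step usually over-fetches candidates from the vector DB and re-scores them with a slower but more precise model. In this sketch `overlap_score` is a hypothetical stand-in for a real cross-encoder or a hosted reranker such as Cohere Rerank. The `rerank` plumbing is the point, not the scorer.

```python
from typing import Callable

def rerank(query: str, candidates: list[str], score: Callable[[str, str], float], top_n: int = 3) -> list[str]:
    """Re-score vector-search candidates with a more precise model and keep the best top_n."""
    return sorted(candidates, key=lambda doc: score(query, doc), reverse=True)[:top_n]

def overlap_score(query: str, doc: str) -> float:
    """Hypothetical stand-in for a cross-encoder: share of query words that appear in the doc."""
    query_words = set(query.lower().split())
    if not query_words:
        return 0.0
    return len(query_words & set(doc.lower().split())) / len(query_words)

candidates = [
    "Our office is closed on public holidays",
    "Refund requests are processed within 14 days",
    "Shipping delays can affect refund timing",
]
print(rerank("how long do refund requests take", candidates, overlap_score, top_n=2))
# ['Refund requests are processed within 14 days', 'Shipping delays can affect refund timing']
```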

Forgetting cost and latency budgets.

Embedding millions of chunks and using large LLMs adds up. Plan for caching, batch embeddings, and smaller models where possible.
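One cheap win is an embedding cache, so unchanged text is never sent to the embedding API twice. This sketch is hypothetical: the `EmbeddingCache` class and the lambda used as `embed_fn` are placeholders for a real batched embedding call. In production the dictionary would typically be a persistent store such as Redis or a database table.

```python
import hashlib
from typing import Callable

class EmbeddingCache:
    """Hypothetical cache: texts already embedded with the same model are never re-sent."""

    def __init__(self, embed_fn: Callable[[list[str]], list[list[float]]], model: str):
        self.embed_fn = embed_fn  # batched embedding call (a real API in production)
        self.model = model
        self.vectors: dict[str, list[float]] = {}
        self.api_calls = 0

    def _key(self, text: str) -> str:
        # Include the model name so a model upgrade never serves stale vectors.
        return hashlib.sha256(f"{self.model}\x00{text}".encode()).hexdigest()

    def embed(self, texts: list[str]) -> list[list[float]]:
        # Deduplicate while preserving order, and keep only cache misses.
        missing = [t for t in dict.fromkeys(texts) if self._key(t) not in self.vectors]
        if missing:
            self.api_calls += 1  # one batched request for all misses
            for text, vector in zip(missing, self.embed_fn(missing)):
                self.vectors[self._key(text)] = vector
        return [self.vectors[self._key(t)] for t in texts]

cache = EmbeddingCache(lambda texts: [[float(len(t))] for t in texts], model="embed-v1")
first = cache.embed(["refund", "shipping", "refund"])
cache.embed(["shipping", "returns"])
cache.embed(["refund"])
print(first, cache.api_calls)  # [[6.0], [8.0], [6.0]] 2
```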

How Endurance Softwares Builds RAG and Vector Search Systems

We design vector-database-backed AI systems with a strong focus on retrieval quality, cost, security, and product fit.

Use Case Discovery

We map your data, users, and core questions to a clear AI use case.

Data and Chunking Plan

We design ingestion, cleaning, and chunking based on your content.

Stack Selection

We pick the right embedding model, vector DB, and orchestration layer.

Retrieval and Reranking

We tune retrieval strategy, hybrid search, and rerankers for accuracy.

Evaluation Suite

We build eval datasets, regression tests, and quality dashboards.

Launch and Iterate

We ship, monitor, and continuously improve based on real usage.


Ready to Add AI Search or RAG to Your Product?

Whether you need semantic search, a document chatbot, an internal copilot, or a full RAG platform, Endurance Softwares can help you design, build, and scale it on the right vector database stack.

Conclusion

Vector databases are no longer an experimental piece of AI infrastructure. In 2026, they are the foundation of serious AI products that need to reason over private data, deliver semantic search, and ground LLMs with real context.

The teams that win with AI are not the ones with the biggest model. They are the ones with the cleanest data, the strongest retrieval, and the most disciplined evaluation. A well-designed vector database layer is what makes that possible.

If you are planning to add AI search, RAG, or intelligent assistants to your SaaS or business product, start with the data and retrieval layer first. Everything else builds on top of it.